Search: gpt3 elon musk writes destroy humans

0 results found

FEATURED STORIES

Top 10 Multiplayer Shooters with the Most Players in 2026

Free Fire MAX Redeem Codes March 31, 2026: Get Free Diamonds with 100% Working Codes

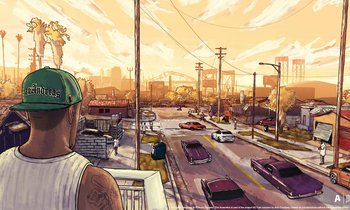

GTA 5's Timeless Graphics: How Rockstar's RAGE Engine Keeps It Looking Great in 2026

Honkai Star Rail Sparxie 4.0 Hypercarry Guide